The teething problems with Waymo self-driving cars

There's a bombshell report on The Information (paywall) today that details just how difficult self-driving cars are to get right, despite whatever positive spin the companies building them put on it.

What's most annoying, it seems, is living among crappy robot drivers that are trying to learn how to drive:

Ms. Cusick and other workers shared similar stories of waiting for minutes at a time behind Waymo vans as they struggled to turn left through the first intersection the vehicles encounter when they leave their depot.

The report says that the Waymo vehicles are programmed to follow every road rule precisely, causing problems on the road because they're better at following the rules than their human counterparts:

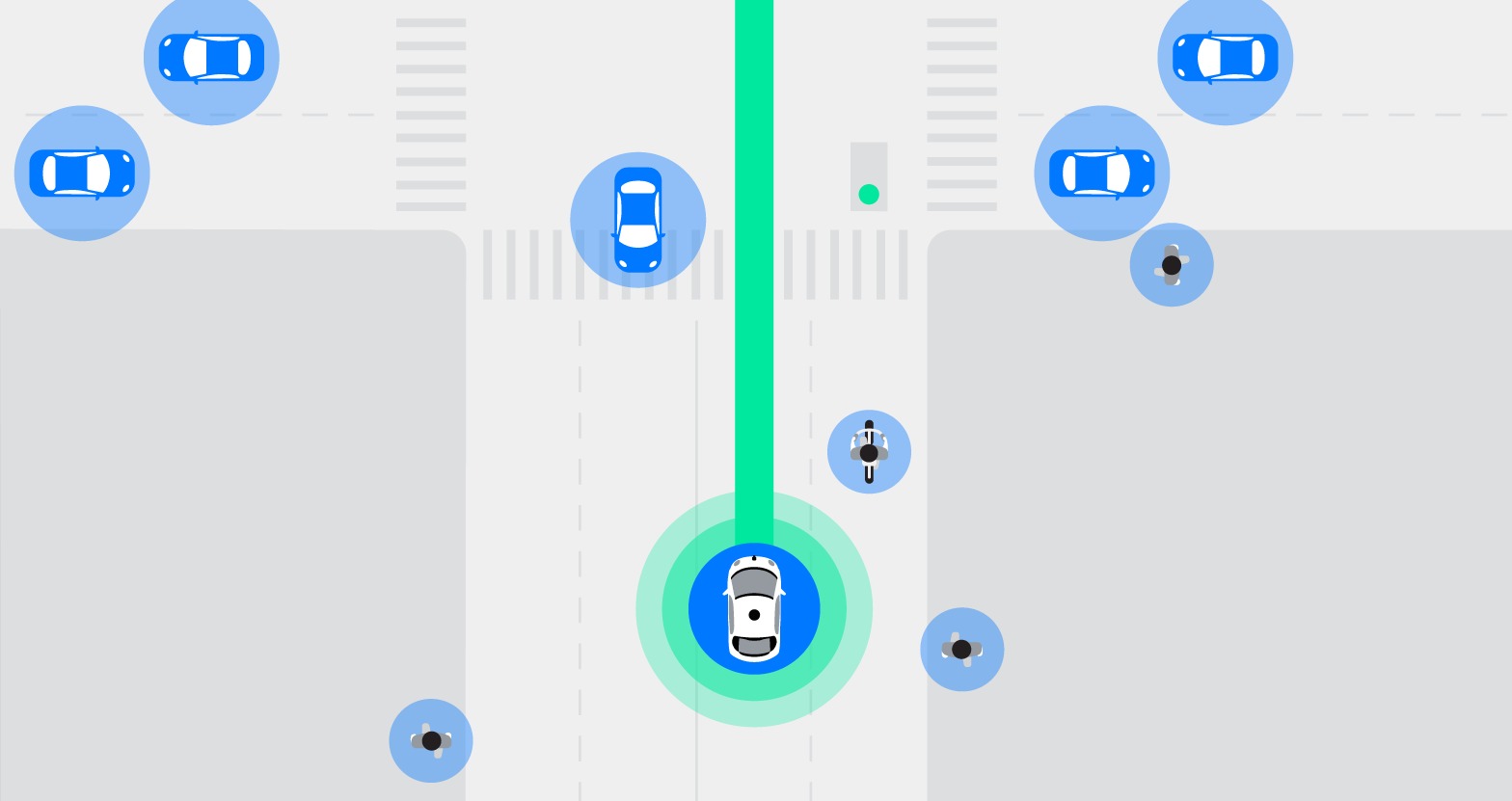

The biggest issue for Waymo’s vans and other companies’ prototypes is human drivers or pedestrians who fail to observe traffic laws. They do so by speeding, by not coming to complete stops, by turning illegally, texting while driving, or with an endless array of other moving violations that have become an accepted part of driving. Waymo’s prototypes sometimes respond to these maneuvers by stopping abruptly in ways that human drivers don’t anticipate. As a result, human drivers from time to time have rear-ended the Waymo vans.

This isn't new: it has been a problem since the beginning of the program, and across the industry. Humans are unpredictable and terrible at following rules, whereas robots are not, and the technology struggles to deal with that grey area.

Here's The New York Times from 2015 reporting on this:

But it can be tough to get around if you are a stickler for the rules. One Google car, in a test in 2009, couldn’t get through a four-way stop because its sensors kept waiting for other (human) drivers to stop completely and let it go. The human drivers kept inching forward, looking for the advantage — paralyzing Google’s robot.

Even in that original report, there's a great quote from Donald Norman, director of the Design Lab at the University of California, San Diego, who said that “The real problem is that the car is too safe, they have to learn to be aggressive in the right amount.” Even more difficult, they must understand the complex, local culture of driving in each location.

Part of Waymo's problem today is that it's under pressure following Uber's self-driving accident. With attention so intensely focused on the industry right now, deviating from the law in any way could cause political problems.

As Benedict Evans points out on Twitter, "it's useless to say 'that tech doesn't work yet' as though it means it never will." Times change, and so do our expectations and the way we use roads, devices or whatever else appears in our lives.

Any narrative that self-driving cars will be a perfect, utopian solution to road accidents is misleading, and The Information report really demonstrates that problem. I'm deeply skeptical that self-driving cars will arrive on the timelines these companies claim, but even so, all they really need to do is drive better than us.

Tab Dump

Trump accuses Google of rigging search results

Yes, he's going after the search algorithm now, claiming that Republican media is "shut out" of results due to rigging. Google says it doesn't happen. He says it's a "serious situation" that will be "addressed". Another day in the White House, but also a threat to technology companies like Google.

Intel's new CPUs are here

I know nobody else gets excited about chipsets, but these new CPUs are focused on better battery life and more performance out of less juice. If anything, it's a signal that a new MacBook Air could be close...

Microsoft's adding AI to the cloud

Some interesting new stuff for those still using Microsoft Office: free audio and video transcription is coming to OneDrive/SharePoint this year. It's a thing I wish every platform would do, because it means everything you've ever created and synced at an organization is searchable, even that one video you can never find.

Read this: God is in the machine

A sobering story about the hidden realities of algorithms making decisions for companies.